By Holly Chik

Researchers say the system not only outperforms American competitors in solving problems, it can tackle an even tougher challenge.

A Chinese AI system has outperformed its US competitors in solving geometry problems at the International Mathematical Olympiad (IMO) level, taking less than half the time and running on far more modest computing hardware, according to its developers.

Unlike existing models confined to problem-solving, the Chinese system can also generate mathematical problems – three of which appeared in a Chinese national team qualifying exam and a top Olympiad in the United States in 2024.

“We present TongGeometry, a neuro-symbolic system that discovers, proposes and proves IMO-level geometry problems through principled tree search,” researchers from the Beijing Institute for General Artificial Intelligence and Peking University wrote in the peer-reviewed journal Nature Machine Intelligence on Monday.

The developers of TongGeometry said the system “functioned more like a coach who both designs training problems and guides solution strategies, rather than merely a student who solves given problems”.

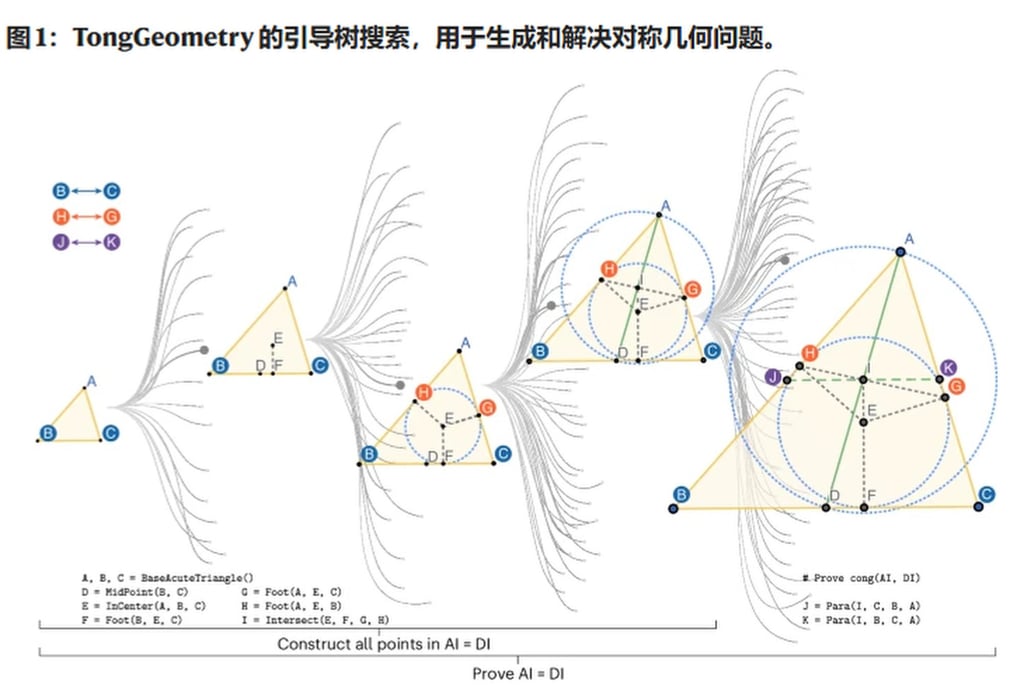

This diagram illustrates TongGeometry’s method for navigating tree-structured geometry spaces while preserving symmetry. Photo: Handout

Drawing on 196 past Olympiad geometry problems, the system generated 6.7 billion geometry problems that required auxiliary constructions.

Of these, 4.1 billion problems featured mathematical symmetry, “a quality highly prized in competitions”, according to the researchers.

The researchers then selected 10 problems suitable for maths Olympiads. They submitted four proposals to the 2024 National High School Mathematics League in Beijing, with one being selected as the competition’s only geometry problem.

Of the six proposals put forward for the 2024 US Ersatz Math Olympiad, two made the shortlist.

For comparison, AlphaGeometry, an Olympiad-level AI system for geometry developed by Google DeepMind and New York University, “successfully solved only three of our 10 proposals”, they wrote.

On its debut in 2024, AlphaGeometry solved 25 of 30 Olympiad-level geometry problems within competition time limits. This benchmark set consisted of problems from IMOs held between 2000 and 2022.

This performance rivalled that of elite human competitors, nearly matching the 25.9 average score typically achieved by IMO gold medallists.

Tested on the same benchmark, “TongGeometry became the first method to surpass IMO gold medallists, successfully proving all 30 benchmark problems”, according to the Chinese researchers. They added that the feat was achieved within 38 minutes using consumer-grade computing resources.

“This achievement represents the first automated system to surpass the average IMO gold medallist performance on this benchmark,” they wrote, noting that “we do not claim TongGeometry surpasses an average IMO gold medallist in geometry generally”.

As for its computing resources, TongGeometry solved the problems on a consumer-grade machine with 32 CPU cores and one Nvidia RTX 4090 GPU in 38 minutes.

In contrast, AlphaGeometry needed 246 CPU cores and four Nvidia V100 graphics cards to solve the problems within 90 minutes, “a hardware set-up generally unavailable to regular consumers”, according to the researchers.
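The "less than half the time" comparison can be checked with a few lines of arithmetic. The sketch below uses only the figures reported above; the variable names are illustrative, and because the 90 minutes quoted for AlphaGeometry is an upper bound ("within 90 minutes"), the time ratio is a rough indication rather than a precise measurement. The two systems also used different GPU models, so the GPU counts are not directly comparable.

```python
# Figures as reported in the article; names here are illustrative only.
tong_geometry = {"minutes": 38, "cpu_cores": 32, "gpus": 1}    # one RTX 4090
alpha_geometry = {"minutes": 90, "cpu_cores": 246, "gpus": 4}  # four V100s

# Ratio of TongGeometry's reported time to AlphaGeometry's reported time limit.
time_ratio = tong_geometry["minutes"] / alpha_geometry["minutes"]

# Ratio of CPU core counts between the two set-ups.
core_ratio = tong_geometry["cpu_cores"] / alpha_geometry["cpu_cores"]

print(f"time ratio: {time_ratio:.2f}")
print(f"CPU core ratio: {core_ratio:.2f}")
print("less than half the time:", time_ratio < 0.5)
```

On these numbers, TongGeometry used roughly 42 per cent of the time and about 13 per cent of the CPU cores.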

The International Mathematical Olympiad is an annual global competition for high school students. Medallists show exceptional ability to logically navigate from axioms to conclusions, a task described as one of the most sophisticated forms of human reasoning.

“Geometry problems within these competitions have emerged as a critical benchmark for AI researchers, presenting formidable challenges due to their unique combination of formal logic and spatial reasoning,” they wrote.

Meanwhile, proposing mathematical problems was equally prized, the researchers added, saying it was arguably more challenging than solving problems in mathematics competitions.

“Formulating original, elegant problems requires not only mathematical mastery but also aesthetic sensibility, rarely captured computationally,” they wrote.

“Mathematical elegance, particularly symmetry in various forms, serves as a critical quality criterion in prestigious competitions.”

Source: chinese-ai-goes-next-level-geometry-top-us-maths-olympiad
